SINGAPORE – Is it ethical to publish artificial intelligence-generated content based on a celebrity’s past interviews?

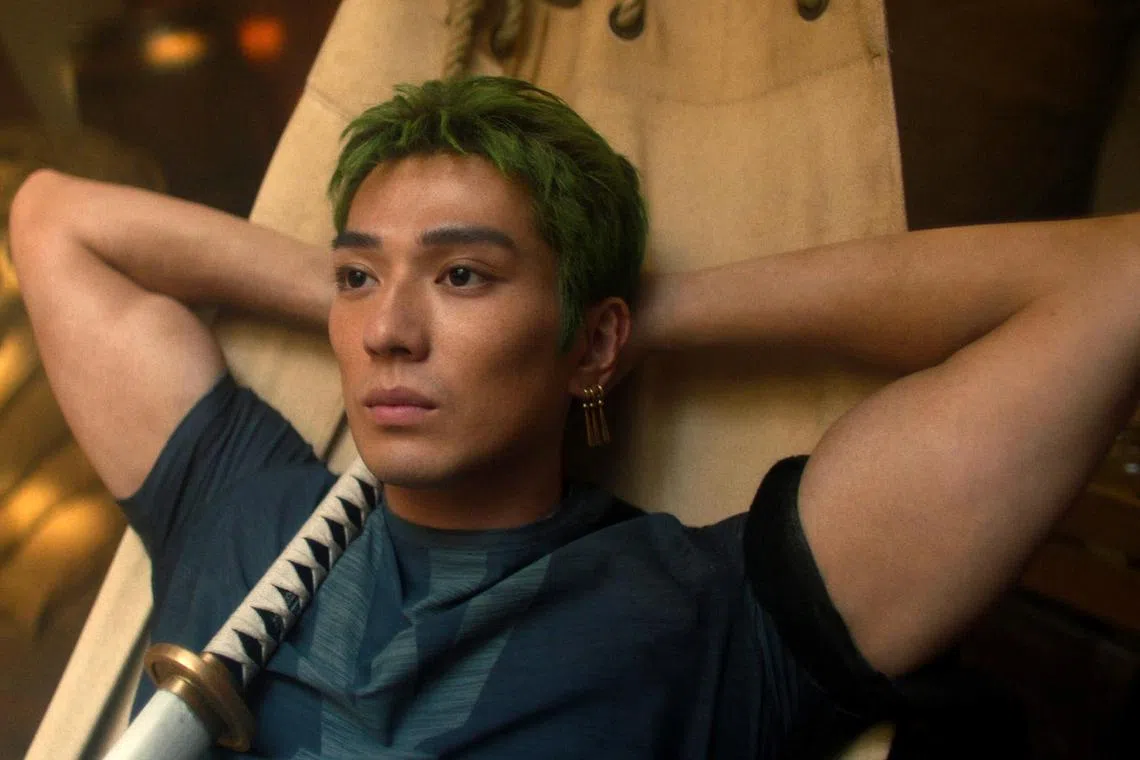

The public backlash over Esquire Singapore’s AI-generated interview with Mackenyu, star of Netflix’s live-action series One Piece, seems to suggest not.

The lifestyle magazine explained that its decision to use AI to fabricate interview responses came after the actor failed to respond to questions in time for publication.

What other AI application blunders have occurred in recent times that should not be repeated? The Straits Times takes a look at nine high-profile ones.

For Esquire Singapore’s March 2026 issue, Japanese-American actor Mackenyu, who goes by just this name, had taken part in a cover photo shoot but was unable to reply to e-mail interview questions. To fill the gap, the editorial team uploaded past interviews done by the celebrity onto AI tools Claude and Copilot, and generated fresh responses to new questions.

The piece drew swift backlash, especially over AI-generated comments about his relationship with his late father, which were later removed.

Critics called it lazy and unethical journalism, while Esquire defended it as a deliberate creative choice tied to the issue’s theme, saying it had noted the feedback for future editorial decisions.

In March 2026, two lawyers were ordered to pay $5,000 each after two fictitious cases were cited in closing submissions for a civil dispute.

Lead lawyer Goh Peck San from P S Goh & Co had hired Mr Amarjit Singh Sidhu from Amarjit Sidhu Law to prepare a draft of the closing submissions. Mr Singh then passed the task on to a paralegal. The paralegal had left the law firm in July 2025 and was uncontactable.

Neither lawyer knew that AI tools had been used in preparing the draft, and the fabricated citations were uncovered only after the opposing counsel flagged them in reply submissions.

The case marks Singapore’s second reported instance of fake AI-generated legal citations.

In October 2025, lawyer Lalwani Anil Mangan from DL Law Corporation was fined $800 after a non-existent case generated by AI was found in his court documents.

Justice S. Mohan warned that such mistakes pose a serious threat to the administration of justice.

Freelance author and journalist Alex Preston used an AI tool to draft a January 2026 book review for The New York Times, but it ended up incorporating phrases and descriptions from a Guardian review published in August 2025.

The similarities were spotted by a reader, prompting the American newspaper to investigate Mr Preston, who had written six reviews for the publication between 2021 and 2026.

Although he said AI was used only in the latest piece, the Times said it would stop working with him as of March 2026. The publication said that Mr Preston’s reliance on AI and unattributed material breached the paper’s editorial standards.

Mr Preston, who is also the author of several books, apologised to the Times and the Guardian, as well as to the writer of the Guardian book review.

In early November 2025, Kumma, an AI teddy bear sold by a Singapore firm, alarmed testers after the toy discussed explicit sexual content and gave advice on where to find knives, pills, matches and plastic bags at home. The US$99 (S$130) toy reportedly veered into graphic sexual content after users triggered it with the word “kink”.

Singapore-based company FoloToy pulled the toy from shelves and spent a week tightening its content-moderation and child-safety guard rails before gradually restoring sales.

A July 2025 report by financial services firm Deloitte Australia was found to contain a fabricated quote from a federal court judgment and references to non-existent academic studies.

The discovery forced Deloitte to refund the Australian Department of Employment and Workplace Relations more than 20 per cent of the A$440,000 (S$396,000) the latter had paid to commission the report.

After the errors were flagged by a Sydney University researcher of health and welfare law, Deloitte published a revised version of the report in October 2025. It also disclosed that it had used Azure OpenAI in writing the report.

In August 2025, Australian independent barrister Rishi Nathwani apologised after court submissions in a teenage murder case were found to contain fabricated quotes and non-existent case judgments generated by AI.

The false material, filed in the Supreme Court of Victoria, forced a 24-hour delay in the resolution of the case.

Mr Nathwani is a King’s Counsel, a title reserved for the highest level of the legal profession in Australia.

For two years, the owner of car services firm Lambency Detailing impersonated eight clients to fabricate five-star customer reviews using ChatGPT on vehicle marketplace Sgcarmart.

Lambency Detailing’s ruse was uncovered when a customer discovered reviews posted under her name and complained to consumer watchdog Competition and Consumer Commission of Singapore.

An investigation into Lambency Detailing’s holding company Quantum Globe was launched in January 2025, and seven other customers’ names, vehicle registration numbers and vehicle photographs were found to have been posted without their permission. Quantum Globe was rapped by the consumer watchdog.

While authors such as Chilean-American writer Isabel Allende and Korean-American journalist Min Jin Lee are real, the books credited to them in a May 2025 summer reading list published by the Chicago Sun-Times did not exist.

The fabricated titles were generated by AI assistant Claude after a freelance writer used the tool to compile the reading list, which also included real books.

After the incident, the newspaper said it would indicate when content comes from a third party, and also reviewed its relationships with contractors to ensure they meet newsroom standards.

Air Canada was ordered to pay more than C$800 (S$740) after its chatbot incorrectly assured a passenger rushing to attend his grandmother’s funeral that he could book an air ticket at full price and seek a bereavement discount refund later.

He later discovered the information was inaccurate.

The passenger sued the airline and, in its defence, the airline argued that the chatbot was a “separate legal entity” responsible for its own actions.

A Canadian tribunal rejected that claim, ruling that the airline remained liable for all information published on its website, including that provided by its chatbot.

AI-made images and videos tend to contain extra fingers or teeth, distortions in the background, and jerky or unnatural movements (weird blinking or facial expressions). Audio playback that does not sync with lip movements or on-screen actions is also a red flag.

Overly polished and repetitive writing is a sign of AI-generated text. Buzzwords and jargon are often used by an AI tool to plug gaps in its understanding.

Alarm bells should ring if a text, image or video is missing information about its source, yet makes highly provocative claims. Users can also use image search tools to trace an image’s original source, examine available metadata, and cross-check the material against reputable news outlets or official sources.